Nuclear fusion is the power of the stars. Starlight that reaches us from light years away is produced by nuclear reactions that occur at immense temperatures and pressures deep within the cores of stars. These conditions allow the nuclei in the soup of charged gas particles (plasma) to overcome their electrical repulsion and, bound by the strong nuclear force, fuse into heavier elements, from Helium all the way up to Iron, converting the change in nuclear binding energy into heat, electromagnetic radiation and particle kinetic energy.

Such power has only been harnessed by humans in thermonuclear weapons, which are by far the most efficient fusion reactors we have yet constructed. In even a modest thermonuclear bomb, the fusion stage is triggered by the pressure, heat and X-ray radiation of the fission stage, and the fusion reactions can release the energy equivalent of over 1 million tons of TNT in a partially self-sustained fireball that concentrates the energy in a blast lasting just a few seconds. Needless to say, the devastation from such a device is as wholesale as it gets for a civilization bound to the Earth. The same physics, however, could allow humanity to make its most important leap forward since the dawn of the atomic age: harnessing the power of the stars to provide continuous, clean power for the growth of advanced civilization.

Nuclear fusion reactions require a large input of energy to get started. In stars, the high temperatures and pressures in their cores provide it; in thermonuclear weapons, as briefly discussed, the energy comes from the fission stage of the weapon. In fusion reactors, methods ranging from magnetic fields that pinch the plasma into a doughnut to high-powered lasers that implode the plasma fuel have been used to achieve fusion. In each case, the approach has been to confine the fusion fuel until it reaches a critical density, in the hope of creating a self-sustaining reaction. Magnetic confinement uses powerful magnetic fields to pinch plasma into a ring or a bubble to induce a contained, self-sustaining reaction. A Tokamak is one such device: it confines the plasma in the shape of a torus, or doughnut.

Experimental research on Tokamak systems began in 1956 at the Kurchatov Institute in Moscow, by a group of Soviet scientists led by Lev Artsimovich. Tokamaks are the most promising technology for the ultimate goal of controlled fusion for electricity production, with new modifications of the design constantly being developed and improved, and with potentially promising results towards nearly, or perhaps even fully, self-sustained fusion reactions for power production (this will be discussed further in the article).

Tokamaks can provide megawatt-level power output in the form of neutrons while operating at modest plasma performance. Key design breakthroughs have been achieved by exploiting beam-plasma fusion in the Tokamak design.

Fusion plasma inside a Tokamak reactor. Powerful magnetic fields confine the plasma into a thin bubble of charged particles.

Running a Tokamak is costly, however, and the greatest cost is confining the plasma with magnetic fields. Unless the magnets are superconducting, they require a huge, continuous supply of electrical power, which would exceed the output of even the most efficient reactor. If they are superconducting, the coils must currently be made of a low-temperature superconductor, since the brittle high-temperature superconducting materials risk cracking under the stresses involved. Unlike the high-temperature YBCO or BSCCO superconducting wires, the superconducting wires for a fusion reactor would therefore be made of low-temperature superconducting metal, which needs liquid Helium, itself an expensive and limited resource.

Low-temperature superconductor wires, such as Nb3Sn, are more flexible and less brittle than current high-temperature superconducting ceramics; however, they require liquid Helium to remain superconducting.

All known High Temperature Superconductors (HTS), i.e. superconductors that operate at temperatures above the boiling point of liquid Nitrogen, are oxide ceramics such as YBCO, which are brittle and cannot withstand the high tension found when strong magnetic fields are generated. An HTS that is non-brittle and can be drawn into flexible wires able to withstand high tension would be enormous progress.

Room-temperature superconductivity would truly revolutionize our civilization; however, it is unknown whether it is even possible, as the theory of superconductivity is still incomplete: the mechanism behind high-temperature (cuprate) superconductivity is still not understood.

Liquid Helium requires Helium gas, which is extracted commercially from natural gas fields, the only sources with high enough concentrations, so we still depend on the fossil-fuel industry for this resource. In this case the tail is clearly wagging the dog: we can't say the nuclear fusion cycle is free from fossil fuels if we need their by-products to run our reactors.

Hence magnetic confinement seems to be a resource intensive way to generate power, at least until we discover a superconductor in a phase that

(1) is not a brittle ceramic like YBCO or BSCCO

(2) can be made into malleable, high current carrying wires

(3) can work at Liquid Nitrogen temperatures (at the very least)

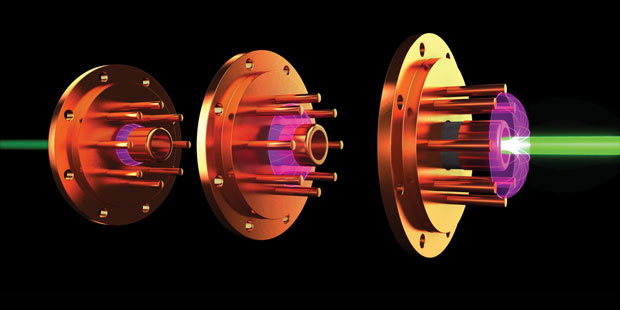

By contrast, laser-induced fusion does not need powerful magnetic fields for confinement, so whether the reaction is sustained is governed simply by how long we pulse the laser on the fuel sample. In most laser fusion experiments, extremely short pulses are focused on the walls of a metal fuel capsule containing a small sphere of hydrogen or helium isotopes, whose nuclei are to be fused. The lasers generate temperatures of millions of degrees outside the fuel capsule, which in turn generate enormous pressures, along with a high flux of X-rays, that heat and compress the gas to the point at which it implodes and fusion reactions occur.

High-energy lasers naturally need a lot of energy to run, but Q-switching lets us create tremendous bursts of power that last a very short time. Since nuclear reactions take very little time, on the order of femtoseconds, the brief interval during which a Q-switch can boost a laser with an average output of a few hundred Watts into the Gigawatt range is more than enough to complete the reaction and continue it to the next, and so on. This is why laser power has long been the standard benchmark for demonstrating, in principle, the range of energies available for nuclear fusion.
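The jump from a few hundred Watts to Gigawatts is simple arithmetic: the average power is divided among the pulses, and each pulse's energy is then squeezed into the pulse duration. The sketch below assumes a hypothetical laser averaging 200 W at 10 pulses per second with 10 ns Q-switched pulses; these figures are illustrative and are not taken from any particular fusion facility.

```python
def pulse_energy_j(average_power_w: float, rep_rate_hz: float) -> float:
    """Energy per pulse: average power divided by the number of pulses per second."""
    return average_power_w / rep_rate_hz


def peak_power_w(average_power_w: float, rep_rate_hz: float, pulse_width_s: float) -> float:
    """Approximate peak power, treating each pulse as flat-topped (rectangular) in time."""
    return pulse_energy_j(average_power_w, rep_rate_hz) / pulse_width_s


# Hypothetical laser: 200 W average output, 10 Hz repetition rate, 10 ns pulses.
energy = pulse_energy_j(200.0, 10.0)
peak = peak_power_w(200.0, 10.0, 10e-9)
print(f"pulse energy = {energy:.1f} J, peak power = {peak / 1e9:.1f} GW")
```

The flat-top assumption keeps the arithmetic transparent; the exact peak of a real pulse depends on its temporal shape.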

(Laser fusion is discussed further in the article)

How to Power Machines with Nuclear Fire

The big question, in physics and engineering terms, is: how can we harness the power of a nuclear weapon in a reactor to provide electricity, in the same way that we generate electricity using generators attached to turbines driven by steam, water or wind?

Our solution to this has always stemmed from the classical idea used in burning fossil fuels, particularly coal: heating water by burning coal makes steam, which turns turbines. This is a highly inefficient process. Not only is heat lost from the water and its containment, but the turbines themselves are far from perfectly efficient, and with normal conductors there are tremendous transmission losses before the electricity even reaches the cities supplied by the power plant.

This same architecture was adopted by nuclear fission reactors, and despite advances in other heat exchanger technologies, such as the proposed Molten Salt Reactors, no considerable effort has been made to get rid of the steam turbine. In fact, the design has continued to be refined, despite a definitive efficiency barrier set by the Carnot cycle in thermodynamics.
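The Carnot limit mentioned above is η = 1 − T_cold/T_hot, with both temperatures in kelvin. The sketch assumes steam at 300 °C and a condenser at 30 °C, roughly the regime of a light-water fission plant; these temperatures are illustrative, and real plants fall well below this bound because of irreversible losses.

```python
def carnot_efficiency(t_hot_k: float, t_cold_k: float) -> float:
    """Upper bound on the efficiency of any heat engine working between two reservoirs (kelvin)."""
    if not 0 < t_cold_k < t_hot_k:
        raise ValueError("require 0 < t_cold_k < t_hot_k")
    return 1.0 - t_cold_k / t_hot_k


# Assumed steam cycle: 300 °C hot side, 30 °C condenser.
t_hot = 300.0 + 273.15
t_cold = 30.0 + 273.15
print(f"Carnot limit: {carnot_efficiency(t_hot, t_cold):.1%}")
```

No amount of turbine refinement can push a steam plant at these temperatures past roughly half of its heat being converted to work.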

We are using the same 18th-century physics, in highly sophisticated and elegantly constructed steam technology, to convert the heat from 21st-century nuclear technology into electricity.

Turbines are crucial for generating electricity, and they are by far the most successful way we have at converting the raw power of nature into power for our civilization. However, by ignoring our fundamental knowledge of what happens in a nuclear reaction we have not noticed that there are other ways to gain energy from them, ways which might be more relevant for nuclear fusion where most of the energy is given off as the kinetic energy of individual particles in a state of ionized gas. We must remember that we are not dealing with a solid block of fuel, or even a dense fluid mass, we are dealing with a thin cloud of charged particles. How can we get energy from such a thing?

By classical electrodynamics, the motion of the charged particles also sets up an electromagnetic field, which can be converted into an electrical current if the particles are streamed through pickup coils. In this way the energy is delivered directly to current-carrying wires without having to capture the particles from the fusion plasma, allowing them to be reused in a new generation of fusion events.

In a uniform magnetic field, the electrons from the plasma are also deflected in the opposite direction to the positively charged ions, and could therefore be directed to a metal sheet and converted directly into an electric current. However, such a system would not, by itself, be the most efficient way to harvest the power.
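The sign flip behind this separation comes from the magnetic part of the Lorentz force, F = q v × B: for the same velocity, a negative electron and a positive alpha particle are pushed in opposite directions, so they gyrate in opposite senses around the field lines. The sketch uses an illustrative velocity of 10⁶ m/s and a 1 T field, both assumed values, and ignores electric fields and collisions.

```python
from typing import Tuple

Vec3 = Tuple[float, float, float]
E_CHARGE = 1.602176634e-19  # elementary charge, C


def cross(a: Vec3, b: Vec3) -> Vec3:
    """Vector cross product a x b."""
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def magnetic_force(charge_c: float, velocity: Vec3, b_field: Vec3) -> Vec3:
    """Magnetic part of the Lorentz force, F = q (v x B), in SI units."""
    vxb = cross(velocity, b_field)
    return (charge_c * vxb[0], charge_c * vxb[1], charge_c * vxb[2])


v = (1.0e6, 0.0, 0.0)  # m/s along x (assumed)
b = (0.0, 0.0, 1.0)    # 1 T along z (assumed)
f_alpha = magnetic_force(+2 * E_CHARGE, v, b)  # alpha particle, charge +2e
f_electron = magnetic_force(-E_CHARGE, v, b)   # electron, charge -e
print(f"alpha pushed along y: {f_alpha[1]:.2e} N, electron: {f_electron[1]:.2e} N")
```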

Since sunlight is a stream of particles called photons, we can gather power by having these particles create excitations in materials. When a photon with enough energy strikes a semiconductor, such as the silicon in a solar panel, it creates an electron-hole pair.

By laying a large array of conductive wires across the silicon, we collect the drift current that forms as electrons are promoted from the valence band to the conduction band and are swept away from their holes by the electric field of the depletion region at the p-n junction. This happens as long as the photon stream is allowed to strike the panel.

Since nuclear reactions can give off charged particles, these should also create electron-hole pairs in semiconductors, and a drift current can therefore be collected across a depletion region. A fusion plasma is filled with charged particles, whose number continues to grow as more and more atoms are added to this soup and become ionized in the reactions.
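How much charge a single fusion product can free is easy to estimate: in silicon, roughly 3.6 eV of deposited energy creates one electron-hole pair on average. The sketch takes the 3.517 MeV helium nucleus from the D-T reaction discussed later in the article and assumes that all of its energy is deposited in the silicon and every pair is collected; in practice recombination, dead layers and radiation damage reduce the yield.

```python
SILICON_PAIR_ENERGY_EV = 3.6  # mean energy per electron-hole pair in silicon, approx. at room temperature
E_CHARGE = 1.602176634e-19    # elementary charge, C


def electron_hole_pairs(deposited_energy_ev: float,
                        pair_energy_ev: float = SILICON_PAIR_ENERGY_EV) -> float:
    """Average number of electron-hole pairs created by a fully absorbed particle."""
    return deposited_energy_ev / pair_energy_ev


alpha_energy_ev = 3.517e6                  # D-T alpha particle energy
pairs = electron_hole_pairs(alpha_energy_ev)
charge_c = pairs * E_CHARGE                # assumes every pair is separated and collected
print(f"{pairs:.3g} pairs, about {charge_c * 1e15:.0f} fC per alpha particle")
```

A single alpha particle therefore liberates on the order of a million charge carriers, which is what makes direct collection in semiconductors attractive.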

Just as advances in laser technology have reduced the scale and expense of large laser systems, and so increased the flexibility of engineering them, the ability of semiconductor materials to absorb radiation has only improved in recent years through projects at CERN and elsewhere in the particle physics community. Silicon technology may hold the true key to converting heat and particle fluxes directly into electricity. Thermoelectric generators are one example of how this can be done for thermal radiation; radioactive Strontium betavoltaic batteries reached efficiencies of 6-8% when used in atomic batteries; and Alpha Voltaics are being developed for nuclear energy conversion, converting charged particles from radioactive sources into an electric current.

The scientific principles of semiconductor physics are well known, but modern nano-scale technology and new wide bandgap semiconductors have created new devices and interesting material properties not previously available.

3D silicon sensor technology may also lead to a revolution in Alpha Voltaics, in which electricity is generated efficiently from charged particle beams. What makes 3D radiation sensors one of the most radiation-hard designs is that the distance between the p+ and n+ electrodes can be tailored to give the best signal efficiency, signal amplitude and signal-to-noise or signal-to-threshold ratio for the expected non-ionizing radiation fluence.

The following diagram compares planar sensors, where electrodes are implanted on the top and bottom surfaces of the wafer, with 3D ones. In the planar design, the depletion region between the two electrodes, separated by a distance L, grows vertically until it is as close as possible to the substrate thickness, Δ. This means that there is a direct geometrical correlation between the generated signal and the size of the depleted volume.

By contrast, in 3D sensors the electrode distance, L, and the substrate thickness, Δ, can be decoupled, because the depletion region grows laterally between electrodes whose separation is much smaller than the substrate thickness. In this case the full depletion voltage, which depends on L and grows as radiation-induced space charge increases, can be reduced dramatically, meaning that the threshold to generate a voltage and an electric current is greatly reduced. This can be used to create a cascade of electron-hole pairs, as is commonly seen in Schottky diodes, which have a very low threshold voltage. Cascaded solar cells similarly exploit lower threshold voltages to generate power at low illumination levels.

Schematic cross-sections of (left) a planar sensor design and (right) a 3D sensor, showing how the active thickness (Δ) and collection distance (L) are decoupled in the latter. Because the charge-collection distance in 3D sensors is much shorter, and high electric fields as well as saturation of the carrier velocity can be achieved at low bias voltage, charge-collection times can be much shorter.
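The dependence on L can be made concrete with the textbook estimate for a uniformly doped, one-dimensional junction, V_fd ≈ q·|N_eff|·L²/(2ε_Si), together with a transit time t ≈ L/v_sat at saturated drift velocity. The sketch applies these to an assumed 300 µm planar sensor and an assumed 50 µm electrode spacing in a 3D sensor. The doping level N_eff and the electron saturation velocity (about 10⁷ cm/s in silicon) are illustrative, and the real field between columnar 3D electrodes is not uniform, so this is only a scaling argument.

```python
E_CHARGE = 1.602176634e-19  # elementary charge, C
EPS0 = 8.8541878128e-12     # vacuum permittivity, F/m
EPS_SI = 11.7 * EPS0        # permittivity of silicon, F/m
V_SAT_E = 1.0e5             # approx. electron saturation velocity in Si, m/s (1e7 cm/s)


def full_depletion_voltage(n_eff_m3: float, distance_m: float) -> float:
    """1D abrupt-junction estimate: V_fd = q * |N_eff| * L^2 / (2 * eps_Si)."""
    return E_CHARGE * abs(n_eff_m3) * distance_m ** 2 / (2 * EPS_SI)


def collection_time(distance_m: float, drift_velocity_m_s: float = V_SAT_E) -> float:
    """Transit time across the collection distance at saturated drift velocity."""
    return distance_m / drift_velocity_m_s


n_eff = 1.0e18  # effective doping, m^-3 (1e12 cm^-3); illustrative value
for label, distance in (("planar, L = 300 um", 300e-6), ("3D, L = 50 um", 50e-6)):
    v_fd = full_depletion_voltage(n_eff, distance)
    t_c = collection_time(distance)
    print(f"{label}: V_fd ~ {v_fd:.1f} V, t_collect ~ {t_c * 1e9:.2f} ns")
```

Because V_fd grows with L², a six-fold shorter electrode spacing cuts the depletion voltage by a factor of about 36, while radiation damage, which raises |N_eff|, raises V_fd in proportion.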

An alpha voltaic battery uses a radioactive substance that emits energetic alpha particles, coupled to a semiconductor p/n junction diode. Alpha voltaics have not been technologically successful to date, primarily because the alpha particles damage the semiconductor material, degrading the electrical output of the cell in just a matter of hours. The key to future development lies in the ability to limit this degradation.

Several approaches to solving this problem have been investigated. One approach uses photovoltaic devices which have good radiation tolerance such as InGaP. Another involves the use of non-conventional cell designs, such as a lateral junction n-type/intrinsic/p-type/intrinsic cell, which minimizes the effect of radiation damage on the overall cell performance.

A third approach uses an intermediate absorber which converts the alpha energy into light which can be converted by the photovoltaic junction.

Alpha-voltaic battery using an intermediate quantum dot absorber layer.

The intermediate absorbers used in this approach are inherently radiation-hard semiconducting quantum dots.

A conservative estimate of only 20% energy conversion efficiency of α-particle energy into useful photons (e.g. one 5.44 MeV α-particle results in ~10⁵ 2.2 eV photons) and a monochromatic conversion efficiency of only 30% could result in the generation of up to 5 mW by a device whose area is less than 1 cm² and which would weigh approximately 3 grams, assuming 2.5 mCi of Am-241 is used (i.e., 2 W/Ci).

The half-life of Am-241, the alpha particle source, is over 400 years. Even with a conservative estimate of the efficiency of the quantum dot emitters due to radiation degradation, the usable lifetime of the device is orders of magnitude greater than that of a comparable rechargeable battery system.
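The power estimate above can be reproduced from the definition of the curie (3.7 × 10¹⁰ decays per second) and the two efficiencies quoted. The sketch uses only the text's numbers plus the Am-241 half-life of about 432 years, and it ignores radiation damage, self-absorption and geometric losses. Worked through this way, the quoted efficiencies give roughly 5 mW from about 2.5 Ci of Am-241 (about 2 mW/Ci) and roughly 5 µW from 2.5 mCi, so a milliwatt-class device needs a source on the curie scale.

```python
CURIE_BQ = 3.7e10           # decays per second in one curie (by definition)
MEV_TO_J = 1.602176634e-13  # joules per MeV
AM241_HALF_LIFE_Y = 432.2   # half-life of Am-241 in years (approximate)


def alphavoltaic_power_w(activity_ci: float, alpha_energy_mev: float,
                         photon_eff: float, pv_eff: float) -> float:
    """Electrical output = decay power * (alpha -> photon) * (photon -> electricity).

    Ignores radiation damage, self-absorption in the source and geometric losses.
    """
    alpha_power_w = activity_ci * CURIE_BQ * alpha_energy_mev * MEV_TO_J
    return alpha_power_w * photon_eff * pv_eff


def remaining_fraction(years: float, half_life_years: float = AM241_HALF_LIFE_Y) -> float:
    """Fraction of the source, and so of its decay power, left after a given time."""
    return 0.5 ** (years / half_life_years)


photons_per_alpha = 5.44e6 * 0.20 / 2.2                     # 2.2 eV photons per 5.44 MeV alpha
p_2_5_mci = alphavoltaic_power_w(2.5e-3, 5.44, 0.20, 0.30)  # 2.5 mCi source
p_2_5_ci = alphavoltaic_power_w(2.5, 5.44, 0.20, 0.30)      # 2.5 Ci source
print(f"photons per alpha: {photons_per_alpha:.2e}")
print(f"2.5 mCi -> {p_2_5_mci * 1e6:.1f} uW, 2.5 Ci -> {p_2_5_ci * 1e3:.1f} mW")
print(f"source remaining after 100 years: {remaining_fraction(100):.0%}")
```

The decay factor supports the long lifetime claimed: after a century, about 85% of the Am-241 source remains.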

The quantum dot alpha-voltaic devices would be capable of operating at low temperatures at which current battery systems would be rendered useless. They can easily be fabricated at microscale sizes, which are extremely difficult to achieve with power sources such as conventional batteries. This attribute makes them extremely attractive for microsystem applications such as biomedical or MEMS sensors.

These small devices would be capable of providing low levels of power for an extremely long period of time (i.e., >100 years) and of operating over a wide range of environments with little if any loss of performance, most notably at extremely low temperatures (i.e., < 100 K), but also in harsh biological environments and, potentially, in the high-temperature, high radiation flux environment of a fusion reactor.

But what about the external shielding? Deuterium-Tritium nuclear reactions release energetic neutrons, which irradiate the reactor core and leave lingering radioactivity in the shielding and moderator long after the reactor has been shut down. This of course creates nuclear waste. Although the amount is small compared with that of current Uranium reactors, it is still waste, and it would be generated at least every 3 years for a given reactor as it is serviced. This waste needs disposing of like any other. Therefore, the statement that a fusion reactor creates no waste at all is misleading. Moreover, in D-T fusion most of the energy released is carried as kinetic energy of the neutron, which is largely unusable: the neutrons are so penetrating that they transfer little of their kinetic energy to the heat exchangers used to produce steam, for example, and they have no electrical charge for use in alpha or beta voltaics. Hence energy is wasted in the reaction itself. The motivation for a reaction that produces few or no neutrons is clear, but is it possible?

The answer is yes and it takes the form of Aneutronic Fusion.

What is Aneutronic Fusion?

In the classical concept of a fusion reactor, two isotopes of hydrogen, deuterium and tritium, are fused together under high temperature and pressure, either inside a fuel capsule that physically contains the plasma or in a tokamak that contains the plasma in a magnetic field.

To get an idea of the scale involved, notice the tiny little lab tech in the blue coat standing on the floor.

The ITER project uses the Deuterium-Tritium reaction, which is a fusion process between the two heavy isotopes of Hydrogen:

²H + ³H → ⁴He (3.517 MeV) + n (14.069 MeV)

The reaction produces Helium-4 and releases a high-energy neutron. For fusion research, the D-T reaction is favored over alternatives such as the D-D reaction:

The D-T reaction has a higher cross section than the D-D reaction. This means that when a deuteron and a triton interact closely, the probability of fusion is higher than between two deuterons at the same kinetic energy, i.e. the Lawson criterion is less demanding.

The reaction rate peaks at about 70 keV, but the optimum energy for initiating the reaction is around 10 keV, lower than the 15 keV for the D-D reaction.

These reduced conditions for fusion make the D-T reaction the preferred reaction of choice for fusion research with plasma confined tokamak reactors.
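The Lawson criterion mentioned above is often expressed as a threshold on the triple product of plasma density, temperature and energy confinement time. A commonly quoted figure for D-T ignition is n·T·τ_E ≳ 3 × 10²¹ keV·s/m³ near 10-20 keV. The sketch treats that threshold as a fixed constant (in reality it varies with temperature), and the plasma parameters are hypothetical, not the specification of any particular machine.

```python
# Commonly quoted approximate D-T ignition threshold for the triple product n * T * tau_E.
DT_IGNITION_TRIPLE_PRODUCT = 3.0e21  # keV * s / m^3, near T ~ 10-20 keV (approximate)


def triple_product(density_m3: float, temperature_kev: float, confinement_time_s: float) -> float:
    """Fusion triple product n * T * tau_E in keV * s / m^3."""
    return density_m3 * temperature_kev * confinement_time_s


def meets_dt_ignition(density_m3: float, temperature_kev: float, confinement_time_s: float) -> bool:
    """Rough ignition check against the fixed threshold above."""
    return triple_product(density_m3, temperature_kev, confinement_time_s) >= DT_IGNITION_TRIPLE_PRODUCT


# Hypothetical plasma: 1e20 ions per m^3 at 15 keV, energy confinement time of 3 s.
n, t_kev, tau = 1.0e20, 15.0, 3.0
print(f"n*T*tau = {triple_product(n, t_kev, tau):.2e} keV s/m^3, "
      f"ignition: {meets_dt_ignition(n, t_kev, tau)}")
```

Because D-D fusion has a much smaller cross section, its corresponding threshold is considerably higher, which is the practical meaning of the "reduced conditions" above.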

However, a number of disadvantages cannot be ignored; the two most crucial are:

- Tritium does not occur naturally in useful quantities, is radioactive and is subject to non-proliferation concerns.

- The highly energetic neutron carries away 80% of the energy, which is virtually completely lost from the reaction. This makes the reaction a source of high-energy, and thus highly penetrating, neutron radiation, which is extremely harmful to all forms of life and can damage the reactor unless it is given enormous shielding.
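The 80% figure follows directly from the energies in the D-T reaction, ²H + ³H → ⁴He (3.517 MeV) + n (14.069 MeV), and also from momentum conservation: when the reactants' momentum is negligible, the alpha particle and the neutron fly apart with equal and opposite momenta, so the kinetic energy E = p²/2m divides in inverse proportion to their masses. The sketch uses approximate nuclear masses and a non-relativistic treatment, which is adequate at these energies.

```python
# Energies of the D-T reaction products, in MeV.
E_ALPHA_MEV = 3.517
E_NEUTRON_MEV = 14.069

# Approximate masses in atomic mass units.
M_ALPHA_U = 4.0015    # helium-4 nucleus
M_NEUTRON_U = 1.0087  # neutron


def neutron_share_from_energies(e_alpha: float, e_neutron: float) -> float:
    """Fraction of the released kinetic energy carried by the neutron."""
    return e_neutron / (e_alpha + e_neutron)


def neutron_share_from_kinematics(m_alpha: float, m_neutron: float) -> float:
    """Two-body break-up from rest: equal momenta, so E_n / E_total = m_alpha / (m_alpha + m_n)."""
    return m_alpha / (m_alpha + m_neutron)


print(f"from the quoted energies: {neutron_share_from_energies(E_ALPHA_MEV, E_NEUTRON_MEV):.3f}")
print(f"from momentum conservation: {neutron_share_from_kinematics(M_ALPHA_U, M_NEUTRON_U):.3f}")
```

The light neutron necessarily takes the larger share, so no choice of D-T operating conditions can shift most of the energy onto the charged alpha particle.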

It should be noted that ITER is a truly noble project, recognizing that we must take it upon ourselves to make nuclear fusion a reality by the end of the century. However, it is not without criticism, some of it justified. First of all, it is not designed to produce power, as is sometimes claimed, since it will not produce more power, in usable form, than is put in. Although detector readings and mathematical manipulation show that reactions can be induced which, for a fraction of a second, release more power in the blast than was put in, this energy is in a form that cannot be converted into power to sustain the reaction. In this sense, all fusion reactors ever built or conceived have faced this criterion, and the reality is that the most efficient fusion devices mankind has built, capable of sustaining a fireball of nuclear plasma for more than a few seconds, are, technically, thermonuclear weapons.

Castle Bravo, a 15 megaton detonation so large that it formed a 7 km fireball in just a second.

Castle Bravo was the United States' first dry-fuel hydrogen (fusion) bomb. Its yield of 15 megatons was three times the 5 megatons predicted by the scientists and engineers who developed it. The bomb's high yield came from the lithium deuteride fusion fuel in its Teller-Ulam design; however, the scale of the increase, which was due to the nature of the lithium isotopes, was unexpected because of a theoretical error made by the designers of the device at Los Alamos National Laboratory. They considered only the lithium-6 isotope in the lithium deuteride secondary to be reactive; the lithium-7 isotope, accounting for 60% of the lithium content, was assumed to be inert. It was expected that the lithium-6 would absorb a neutron from the fissioning plutonium and emit an alpha particle and tritium in the process, and that the tritium would then fuse with the deuterium and increase the yield in a predicted manner. Lithium-6 indeed reacted in this manner.

It was assumed that the lithium-7 would absorb one neutron, producing lithium-8, which decays (via beryllium-8) to a pair of alpha particles on a timescale of seconds, vastly longer than the timescale of a nuclear detonation, so this mechanism was expected to be irrelevant because the bomb would have exploded long before it had a chance to happen. However, when lithium-7 is bombarded with energetic neutrons, rather than simply absorbing a neutron, it captures the neutron and breaks up almost instantly into an alpha particle, a tritium nucleus and another neutron. As a result, much more tritium was produced than expected, and the extra tritium fused with deuterium, producing extra neutrons. The neutrons from this extra fusion, together with those released directly by the lithium-7 break-up, produced a much larger neutron flux. The result was greatly increased fissioning of the uranium tamper and an increased yield.

This resultant extra fuel (both lithium-6 and lithium-7) contributed greatly to the fusion reactions and neutron production, and in this manner greatly increased the device's explosive output. The test used lithium with a high percentage of lithium-7 only because lithium-6 was then scarce and expensive; the later Castle Union test used almost pure lithium-6. Had sufficient lithium-6 been available, the usability of the common lithium-7 might not have been discovered. This story is therefore a very important lesson in why we must learn about every possible fusion mechanism.

Like the nuclear weapons programs of the late 1940s, 50s and 60s, ITER is a project with an enormous budget, twice as big as CERN's and with many more delays. It has also been in development for longer, and every year it has been threatened with termination. Realistically, we cannot rely on an energy source whose viability is constantly in question and wait around for it to become a reality, no matter how pleasing it may seem conceptually. We have had this problem in the past, and out of it arose the hoax of cold fusion, a concept which itself wasted huge amounts of time and money, but which probably arose more out of frustration and genuine disappointment that we had failed to make fusion energy industrially possible.

Aneutronic fusion could also conceivably be dismissed as bunk alongside cold fusion. However, the fact that it has not been considered seriously by scientists and has not been funded is not as worrying as a $20 billion experiment that promises to change the world leading only to more head-scratching, instead of to experimental equipment being developed and tested. Whether fusion is possible on Earth is an experimental question, so experiments must be done to find out, and the risks that come with experiments will always skew any cost-benefit analysis used to argue for more funding, since it is genuinely unknown whether the results will blossom.

Another criticism of fusion is that Deuterium-Tritium fusion itself depends on a nuclear fission industry to produce Tritium from Lithium, through neutron reactions like those that occurred in the Castle Bravo test. Several fusion plants would therefore need to be in operation around the globe to make the fusion industry truly self-sustaining, and this creates the paradox that feeding a tokamak fusion reactor requires the product of a fission reactor. This paradox can partially be resolved by installing a Lithium blanket in the fusion reactor: neutrons from the D-T reaction striking Lithium-6 metal yield Tritium and Helium-4:

⁶Li + n → ⁴He + ³H + 4.78 MeV

An advantage of the Lithium blanket is that it absorbs neutrons from the D-T reaction, and in doing so acts as shielding against the reactor's neutron flux. The problems of designing the Lithium blanket and optimizing it for the fusion process are, however, bigger than those of designing the tokamak reactor itself. The reason is that neutrons with different energies are emitted by the D-D and D-T reactions in a thermonuclear reactor, so obtaining a high Tritium breeding ratio from reactions with Lithium requires a mixture of Lithium isotopes. Another way to increase the Tritium breeding ratio is to dope the Lithium blanket with neutron multipliers such as Beryllium and Lead.
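Why a blanket needs neutron multipliers can be seen from simple bookkeeping: each D-T fusion consumes one triton and releases one neutron, so the tritium breeding ratio (tritons bred per triton burned) can only exceed 1 if some neutrons are multiplied. The sketch is a deliberately crude model; the multiplication factor and lithium capture fraction are hypothetical values, not results from any blanket design, and it ignores, for example, the Lithium-7 reaction that breeds a triton while also releasing a neutron.

```python
def tritium_breeding_ratio(neutron_multiplication: float, capture_fraction_li: float,
                           tritons_per_capture: float = 1.0) -> float:
    """Toy bookkeeping for one D-T fusion (one triton burned, one neutron released).

    neutron_multiplication: neutrons per source neutron after (n,2n) multipliers such as Be or Pb
    capture_fraction_li:    fraction of those neutrons that end up absorbed in lithium
    tritons_per_capture:    tritons bred per lithium capture (1 for Li-6 + n -> He-4 + H-3)
    """
    return neutron_multiplication * capture_fraction_li * tritons_per_capture


# Hypothetical blanket parameters, for illustration only.
without_multiplier = tritium_breeding_ratio(1.0, 0.85)
with_multiplier = tritium_breeding_ratio(1.3, 0.85)
print(f"TBR without multiplier: {without_multiplier:.2f}, with multiplier: {with_multiplier:.2f}")
```

Since some neutrons always escape or are absorbed elsewhere, the capture fraction is below 1 in practice, which is why Beryllium or Lead is needed to push the ratio above 1.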

This all leads to genuine criticism of fusion as a viable alternative to fission, as it cannot truly be viable if it requires nuclear fission by-products, leading many to look to redesigned fission reactor technologies, in particular Thorium, as a much more rewarding way of meeting real energy demands. Nevertheless, aneutronic fusion fuels may help us move away from a pure dependence on nuclear fission in second- or third-generation fusion reactors, if the first generation of Deuterium-Tritium reactors is successful. In short, we must study all potential fuel sources, no matter how exotic they may seem.

The crucial fact also remains that in D-T fusion most of the energy (on average 80%) is released as an energetic neutron. Although this makes the reaction efficient at generating a high neutron flux, which was necessary for the high yield of thermonuclear weapons because it increases the rate of fission in the uranium tamper, it makes the reaction inefficient for generating electricity: the cross section for capturing neutrons in most materials is very low, and the neutrons are not charged, so they cannot induce an electrical current in a pickup coil or semiconductor. Moreover, when neutrons are captured, radiation is given off, either as gamma rays or through the capturing material itself becoming a radioactive isotope. Hence D-T fusion is not free of radioactive waste.

The Proposed Focus Fusion Reactor

Therefore, with all of these problems, it would be attractive to find fusion cycles that release few, if any, neutrons. There are a few isotopes of elements which have this property when their nuclei are fused, and these can be examined in fusion reactions.

The basic premise of an aneutronic fusion reactor is to create and sustain a reaction that generates only charged particles and no neutrons. Not creating vast amounts of high-energy, and thus highly penetrating, neutrons makes the fuel even safer to use, as all parts of the reactor become almost radiation-free a few hours after the reactor is turned off. The catch is that these fuels are considered even more difficult to ignite than deuterium-tritium fuel.
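Whether a fusion reaction releases energy, and how much, follows from the mass difference between reactants and products (E = Δm·c²). The sketch computes this for the D-T reaction and for the proton-boron-11 reaction, p + ¹¹B → 3 ⁴He, which is the hydrogen-boron fuel of the focus fusion design described below and whose products are all charged. Masses are approximate tabulated atomic masses (usable directly here because electron numbers balance on both sides); side reactions, which in practice still produce a small number of neutrons with p-¹¹B fuel, are not modelled.

```python
U_TO_MEV = 931.494  # energy equivalent of one atomic mass unit, MeV (approximate)

# Approximate atomic masses in u.
MASS_U = {
    "n": 1.00866492,
    "H-1": 1.00782503,
    "H-2": 2.01410178,
    "H-3": 3.01604928,
    "He-4": 4.00260325,
    "B-11": 11.00930536,
}


def q_value_mev(reactants: list, products: list) -> float:
    """Energy released by a reaction, from the mass lost between reactants and products."""
    delta_u = sum(MASS_U[r] for r in reactants) - sum(MASS_U[p] for p in products)
    return delta_u * U_TO_MEV


dt = q_value_mev(["H-2", "H-3"], ["He-4", "n"])                    # neutron-producing
pb11 = q_value_mev(["H-1", "B-11"], ["He-4", "He-4", "He-4"])      # charged products only
print(f"D-T: {dt:.2f} MeV, p-B11: {pb11:.2f} MeV")
```

The p-¹¹B reaction releases about half the energy of D-T per reaction, but all of it is carried by charged alpha particles that can, in principle, be harvested directly.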

One of the designs currently under development is the Lawrenceville Plasma Physics Focus Fusion Project, which was outlined before.

The Lawrenceville Plasma Physics focus fusion reactor consists of a capacitor bank that releases a high-voltage current into the cathode and anode array, which is itself around 1 foot (30 cm) long, with an outer cathode diameter of 6-7 inches (15-18 cm).

This is constructed to allow an arc to form through the surrounding hydrogen and boron gas. The initial charge creates the plasma (individual ions of positively charged nuclei and negatively charged electrons).

The plasma organises in response to the transmitted current and related magnetic fields, producing filaments which twist in on each other to eventually create a very dense, tiny plasmoid. This plasmoid, which increases the magnetic fields the plasma is subjected to, interacts with itself to produce the fusion reaction.

The most elegant aspect of this aneutronic reactor design is not only that the reactor emits no neutrons, but that the charged particles are ejected directly in two opposing jets, so there is no need for cooling water and a turbine. Instead, the energy the reactor creates can potentially be converted into electricity directly, through electromagnetic coils that generate current from the motion of the charged particles in the jets, and through thin sheets of metal in the walls of the reactor that capture positively charged particles. The moving electrons also create the magnetic field that pinches the charged particles into the fusion jets, by forming a self-sustaining plasma structure called a plasmoid.

Plasmoids appear to be plasma cylinders elongated in the direction of the magnetic field. A plasmoid has an internal pressure stemming from both the gas pressure of the plasma and the magnetic pressure of the field. To maintain an approximately static plasmoid radius, this pressure must be balanced by an external confining pressure. In a field-free vacuum, for example, a plasmoid will rapidly expand and dissipate.

From this fusion, positively charged helium ions (alpha particles) and negatively charged electrons are ejected in opposite directions: the alpha particles travel one way and the electrons the other, effectively forming a stream of electricity. An animation of the fusor is shown below:

These can be harvested directly in a decelerating coil arrangement, reversing the technology of a particle accelerator, or by replicating the idea of a transformer. The electric current produced is fed into a storage capacitor bank.
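Direct conversion works because a charged particle slowed by a retarding potential gives up its kinetic energy as electrical work, E = qV. As a simplified electrostatic stand-in for the decelerating coil arrangement (a swapped-in picture, not the Lawrenceville design itself), the sketch computes the voltage needed to stop an alpha particle carrying an average one-third share of the roughly 8.7 MeV released by p-¹¹B fusion, and the ideal electrical power from a hypothetical alpha flux. Real alpha energies are spread around this average, and conversion is never lossless.

```python
E_CHARGE = 1.602176634e-19  # elementary charge, C


def stopping_voltage_v(kinetic_energy_ev: float, charge_number: int) -> float:
    """Retarding potential that brings an ion of charge Z*e to rest: V = E / (Z e)."""
    return kinetic_energy_ev / charge_number


def beam_current_a(particles_per_s: float, charge_number: int) -> float:
    """Electric current carried by a beam of ions of charge Z*e."""
    return particles_per_s * charge_number * E_CHARGE


alpha_energy_ev = 8.68e6 / 3  # average share per alpha in p + B-11 -> 3 He-4 (assumed equal split)
alpha_rate = 1.0e18           # hypothetical alpha particles per second reaching the converter
v_stop = stopping_voltage_v(alpha_energy_ev, 2)
current = beam_current_a(alpha_rate, 2)
ideal_power = current * v_stop  # lossless conversion: P = I * V
print(f"V_stop ~ {v_stop / 1e6:.2f} MV, I ~ {current:.3f} A, P_ideal ~ {ideal_power / 1e3:.0f} kW")
```

The megavolt-scale stopping voltage is why direct conversion is usually described as running a particle accelerator in reverse.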

So, in addition to the reactor itself, the design needs basically just a set of capacitors to create the pulses and a shield of about one meter of water. The reactor is so simple that it could be built at a relatively small scale, around 200 megawatts, and placed close to its users in order to reduce losses in the power grid. According to calculations by Eric Lerner, the designer, inventor and mastermind behind the Focus Fusion reactor, this kind of energy, if perfected, could cost one-fifth as much as today's cheapest electricity, and would probably amount to a minor revolution in global energy supply if it succeeded.

If there is anything to object to in this variant of the reactor, it is that it sounds too good to be true, so people need to be extremely cautious about how much can be promised on the basis of design and concept alone. The physics behind the design specifications may well be sound, but the cost-benefit analysis that helps self-correct any engineering project may ultimately find the reactor unsound in practical terms. Nonetheless, it is a reasonable project that tests fusion using a new technique on an old idea.

The following chart is three years old, but it shows that their small reactor had already achieved higher pressure and electron velocity than the large tokamak reactor. The creation of the plasmoid itself is shown at the energy level labelled with a blue dot (FRC) in the p-B11 reactor diagram. The black dots indicate what is required for hydrogen-boron fusion, and the blue-green dot (TFTR) shows what tokamak reactors had achieved by then. The red dot (DPF = dense plasma focus) indicates the result achieved by the dense plasma focus.

So we can indeed wish Focus Fusion the best in their future work, which we can follow with excitement. If nothing else, it will help settle whether fusion is achievable on Earth without neutrons carrying most of the power away, using an approach quite different from the gargantuan (both in size and in cost) tokamak D-T fusion projects such as ITER. Below is a picture of their new experimental reactor, which has already produced hydrogen-boron fusion reactions. The project's schedule calls for increasing the fusion output and proving scientifically that a net energy gain is possible.
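The point about neutrons carrying away most of the power can be checked with momentum conservation. Deuterium-tritium fusion, D + T → ⁴He + n, releases about 17.6 MeV. If the reacting nuclei carry negligible momentum, the two products fly apart with equal and opposite momenta, so their kinetic energies are inversely proportional to their masses. This sketch ignores the fuel's initial kinetic energy and relativistic corrections.

```python
Q_DT_MEV = 17.59        # energy released by D + T -> He-4 + n, MeV
M_ALPHA_U = 4.001506    # He-4 nuclear mass, atomic mass units
M_NEUTRON_U = 1.008665  # neutron mass, atomic mass units

def product_energies(q_mev, m_a, m_b):
    """Split q_mev between two products with equal and opposite momenta.

    With equal momentum p, E = p^2 / (2m), so each product's share is
    proportional to the *other* product's mass.
    """
    e_a = q_mev * m_b / (m_a + m_b)
    e_b = q_mev * m_a / (m_a + m_b)
    return e_a, e_b

e_alpha, e_neutron = product_energies(Q_DT_MEV, M_ALPHA_U, M_NEUTRON_U)
print(f"alpha: {e_alpha:.2f} MeV, neutron: {e_neutron:.2f} MeV")
print(f"neutron share: {e_neutron / Q_DT_MEV:.1%}")
```

The neutron takes about 14.05 MeV, roughly 80 percent of the energy. This motivates aneutronic fuels such as hydrogen-boron, whose main reaction produces only charged alpha particles.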

Another method (apart from the plasmoid method) has also been proposed for producing fusion power with boron and hydrogen: compressing the ions electrostatically in a vacuum chamber, known as inertial electrostatic confinement. Its results are shown with the purple dot (IEC). In other words, the dense plasma focus method currently seems to lead development.

In Focus Fusion, a pulse of electrical arcs fuses boron-containing fuel in a target with a beam of protons. As Eric Lerner puts it, this technique creates a "quasar in a bottle."
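The hydrogen-boron reaction involved here is p + ¹¹B → 3 ⁴He. Its energy release follows from the mass lost between reactants and products. The sketch below uses standard atomic masses (rounded). It is a textbook illustration, not a calculation taken from the Focus Fusion project.

```python
# Atomic masses in unified atomic mass units (u). Electron masses cancel
# because 1H + 11B and 3 x 4He each contain six electrons.
M_H1 = 1.00782503
M_B11 = 11.00930536
M_HE4 = 4.00260325
U_TO_MEV = 931.494  # energy equivalent of 1 u, MeV

def q_value_mev(reactant_masses, product_masses):
    """Energy released (MeV): mass lost in the reaction times c^2."""
    return (sum(reactant_masses) - sum(product_masses)) * U_TO_MEV

q_pb11 = q_value_mev([M_H1, M_B11], [M_HE4] * 3)
print(f"p + 11B -> 3 alpha releases {q_pb11:.2f} MeV")
print(f"average energy per alpha: {q_pb11 / 3:.2f} MeV")
```

All of the roughly 8.7 MeV appears as kinetic energy of charged alpha particles. That is what makes direct conversion into electric current conceivable.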

Perhaps one could liken this to the way someone skilled enough can create smoke rings. Scientists also suspect that this phenomenon is related to the elusive ball lightning, which they do not yet clearly understand. The idea in all cases is that two plasma rings of this kind collide, creating a kind of dynamo that both keeps the gas ionised as a plasma and confines it in space. This relates to another fusion device, the spheromak, which is discussed in detail next. In the spheromak, magnetic fields pass through a plasma induced to flow down the side of a vacuum vessel. This causes two plasma lobes to combine into a self-organizing, self-sustaining confined plasma state.

Self-Sustaining Plasma Reactors: The Spheromak.

The magnetized plasmas in Lawrence Livermore’s Sustained Spheromak Physics Experiment (SSPX) are much smaller and far shorter-lived than their celestial cousins in solar flares and prominences, but the two varieties nevertheless share many of the same properties. If the Focus Fusion DPF reactor can be called a "quasar in a bottle", then the spheromak can certainly be called a "solar flare in a bottle".

In both instances, fluctuating magnetic fields and plasma flows create a dynamo that keeps the ionized hydrogen plasma alive and confined in space. Magnetic fields pass through the flowing plasma and eventually touch one another and reconnect. When a reconnection occurs, it generates more plasma current and changes the direction of the magnetic fields to confine the plasma. This “self-organizing” dynamo is a physical state that the plasma forms naturally.

Magnetic reconnection is key for confining the plasma in space and sustaining it over time. In an experimental situation such as SSPX, an initial electrical pulse is applied across two electrodes, forming a plasma linked by a seed magnetic field. Reconnection events generated by the plasma itself convert the seed field into a much stronger magnetic field that shapes the plasma and prevents it from touching the walls of the spheromak’s vessel.

| Inside the SSPX’s flux conserver, the hot plasma forms a doughnut shape called a torus. |

The Laboratory’s interest in creating such plasmas and learning how they function derives from Livermore’s long history of exploring fusion energy as a source of electrical power. If a self-organizing plasma can be made hot enough and sustained for long enough to put out more energy than was required to create it, the plasma could prove to be a source of fusion energy. For several decades, researchers have been examining both magnetic and inertial confinement methods for generating fusion energy. The magnetized plasmas of Livermore’s spheromak represent one possible route to a source of abundant, inexpensive, and environmentally benign energy. (See the box below.)

Now semiretired, physicist Bick Hooper was assistant associate director for Magnetic Fusion Energy in the mid-1990s when he participated in a review of data from Los Alamos National Laboratory’s spheromak experiments conducted in the early 1980s. The reanalysis suggested the plasma’s energy was confined up to 10 times better than originally calculated and that plasma confinement improved as the temperature increased. The reviewers theorized that as temperatures increase in the plasma, electrical resistance decreases and energy confinement improves, promoting the conditions for fusion. In light of this reanalysis, the scientific community and the Department of Energy (DOE) decided to pick up where the Los Alamos experiments had left off. SSPX was designed to determine the spheromak’s potential to efficiently create hot fusion plasmas and hold the heat.

The physics of SSPX is the same as that of solar coronal loops and flares. These loops can reach millions of kelvin and can produce fusion reactions. They are assumed to be the source of the million-degree temperatures found in the Sun's outer atmosphere, the corona, which is far hotter than the Sun's surface at about 5,800 kelvin. The fusion reactions there occur at a scale several orders of magnitude smaller than the Sun itself. They are also driven by magnetic fields pinching the plasma, conditions we can replicate and even surpass in the lab. Studying the fusion process in solar coronal loops is therefore highly important.

Early investment in research on spheromak plasmas by Livermore’s Laboratory Directed Research and Development Program was instrumental in the decision to build SSPX at Livermore. (See S&TR, December 1999, Experiment Mimics Nature’s Way with Plasma.) Since 1999, when SSPX was dedicated, a team led by physicist Dave Hill has boosted the plasma’s electron temperature from 20 electronvolts to about 350 electronvolts, a record for a spheromak. “We’re still a long way from having a viable fusion energy plant,” acknowledges Hill. “For that, we would need to reach at least 10,000 electronvolts.” However, conditions obtained to date are a significant step toward achieving fusion with a spheromak.
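Plasma physicists quote temperatures in electronvolts. One electronvolt corresponds to about 11,600 kelvin (the electronvolt divided by Boltzmann's constant). The short conversion below places the SSPX figures alongside the million-degree corona mentioned in the preceding paragraphs.

```python
EV_TO_KELVIN = 11604.518  # temperature equivalent of 1 eV (e / k_B), in K

def ev_to_kelvin(t_ev):
    """Convert a temperature in electronvolts to kelvin."""
    return t_ev * EV_TO_KELVIN

def kelvin_to_ev(t_k):
    """Convert a temperature in kelvin to electronvolts."""
    return t_k / EV_TO_KELVIN

# Electron temperatures quoted for SSPX and the stated fusion-plant target
for label, t_ev in [("first SSPX plasma", 20),
                    ("SSPX record", 350),
                    ("fusion plant target", 10_000)]:
    print(f"{label:>20}: {t_ev:>6} eV = {ev_to_kelvin(t_ev):.3g} K")

# A one-million-kelvin corona, expressed in electronvolts
print(f"1 MK corona ~ {kelvin_to_ev(1e6):.0f} eV")
```

The 350 eV record is roughly 4 million kelvin, and the 10,000 eV target is over 100 million kelvin.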

“The science of these plasmas is fascinating,” says Hill. “Not only might they prove useful for producing fusion energy, but also their physics is essentially the same as the solar corona, interplanetary solar wind, and galactic magnetic fields. However, we still have much to learn about magnetized plasmas. For instance, we do not completely understand how magnetic dynamos work. We know that Earth’s magnetic core operates as a dynamo, but scientists have barely begun to model it. Magnetic reconnection, essential for containing and sustaining the plasma, is another phenomenon that is not well understood.”

Livermore’s spheromak research is aimed primarily at increasing the plasma’s temperature and gaining a better understanding of the turbulent magnetic fields and their role in sustaining the plasma. “We need some turbulence to maintain the magnetic field, but too much turbulence kills the plasma,” says Livermore physicist Harry McLean, who is responsible for diagnostics on SSPX. “It’s a complicated balancing act.”

Because scientists want to learn more about what is going on inside the fusion plasma and find ways to improve its behavior, experiments on SSPX are augmented by computational modeling using a code called NIMROD. The code was developed by scientists at Los Alamos, the University of Wisconsin at Madison (UWM), and Science Applications International Corporation. Results from SSPX experiments and NIMROD simulations show good agreement. However, NIMROD simulations are computationally intensive. Modeling just a few milliseconds of activity inside the spheromak can take a few months on a parallel supercomputing cluster.

Collaborators in the SSPX venture include the California Institute of Technology (Caltech), UWM, Florida A&M University, University of Chicago, Swarthmore College, University of Washington, University of California at Berkeley, and General Atomics in San Diego, California. Through the university collaborations, an increasing number of students are working on SSPX and making important contributions. Livermore is also a participant in the National Science Foundation’s (NSF’s) Center for Magnetic Self-Organization in Laboratory and Astrophysical Plasmas.

A Spheromak Up Close

When spheromak devices were first conceived in the early 1980s, their shape was in fact spherical. But now the vessel in which the plasma is generated, called a flux conserver, is cylindrical in shape. Inside the flux conserver, the swirling magnetized, ionized hydrogen looks like a doughnut, a shape known as a torus.

Livermore physicist Reg Wood is operations manager for SSPX and managed the team that built the device in the late 1990s. Hooper and others were responsible for its design. According to Wood, “The design incorporates everything anyone knew about spheromaks when we started designing in 1997. The injector has a large diameter to maximize the electrode surface area. The shape of the flux conserver and the copper material used to form it were selected to maximize the conductivity of the walls surrounding the plasma. The vessel would be somewhat more effective if we had been able to build it with no holes. But we needed to be able to insert diagnostic devices for studying the plasma.”

The earliest SSPX experiments in 1999 were not a success. “The first few yielded no plasma at all,” says Wood. The electron temperature in the first plasma was just 20 electronvolts, but it has climbed steadily ever since.

Getting a spheromak plasma started requires a bank of capacitors to produce a high-voltage pulse inside a coaxial gun filled with a small seed magnetic field. Two megajoules of energy zap a puff of hydrogen. A plasma then forms inside the gun, carrying a now much larger, twisted magnetic field. The degree to which its field lines are twisted and linked together is measured by a quantity called magnetic helicity. A lower-current pulse from a second capacitor bank helps to sustain the magnetic field.
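The energy stored in a capacitor bank is E = ½CV². As a rough sketch, the code below estimates the capacitance needed to store the two megajoules quoted above at a few charging voltages. It assumes all stored energy reaches the gun. The voltages are hypothetical and are not SSPX's actual bank specifications.

```python
def stored_energy_j(capacitance_f, voltage_v):
    """Energy stored in a capacitor bank: E = C V^2 / 2."""
    return 0.5 * capacitance_f * voltage_v ** 2

def capacitance_for_energy(energy_j, voltage_v):
    """Capacitance (F) needed to store energy_j at a given charging voltage."""
    return 2.0 * energy_j / voltage_v ** 2

TARGET_J = 2e6  # two megajoules, as quoted for SSPX plasma formation

# Hypothetical charging voltages, chosen only to show the trade-off
for kv in (5, 10, 20):
    c = capacitance_for_energy(TARGET_J, kv * 1e3)
    print(f"{kv:>2} kV -> {c * 1e3:.1f} mF")
```

Because energy grows with the square of voltage, doubling the charging voltage cuts the required capacitance by a factor of four. This is one reason high-voltage banks are preferred.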

The magnetic pressure then drives the plasma and the magnetic field within it downward into the flux conserver. As the plasma continues to expand further down into the vessel, magnetic fluctuations increase until magnetic field lines abruptly pinch off and reconnect.

The temperature is also nonuniform, hotter at the center and cooler at the walls. In this initial chaos, many small and large reconnection events occur until an equilibrium is reached. If the plasma and its confining magnetic field form a smooth, axisymmetric torus, a high-temperature, confined plasma can be sustained. (see next diagram for more visual insight)

A certain amount of turbulence in the plasma’s interacting magnetic fields is essential for the dynamo to sustain and confine the plasma. But magnetohydrodynamic instability can cause small fluctuations and islands in the magnetic fields, undercutting axisymmetry and lowering confinement and temperature. Controlling fluctuations is key. “We want a nice tight torus,” says Hooper, “but if it is too tight, that is, it has too little fluctuation, electrical current can’t get in. We want to hold in the existing energy and also allow in more current to sustain the magnetic fields.” Even under the best conditions, plasmas in SSPX have lasted a maximum of 5 milliseconds.

The team pulses SSPX 30 to 50 times per day, usually three days a week, and each experiment produces an abundance of data. “Our ability to run experiments rapidly outstrips our ability to analyze the data,” notes McLean.


| (a) An initial electrical pulse is applied across two electrodes (the inner and outer walls of the flux conserver), forming a plasma linked by a seed magnetic field. (b) In these cutaway views, the growing plasma expands downward into the flux conserver, which is a meter wide and a half meter tall. Magnetic reconnection events convert the seed magnetic field into a much stronger magnetic field that sustains and confines the plasma. |

The plasma formed in a spheromak is highly sensitive to many kinds of perturbations. For example, if a diagnostic probe is inserted into the hot plasma, the probe’s surface can be vaporized, which will introduce impurities and cause the plasma’s temperature to drop. Another challenge is that the plasma’s fast motion, high heat, and low emissivity can damage cameras and similar optical devices if they are mounted too closely. Therefore, few direct measurements are possible.

Most diagnostic devices are mounted at the median plane of the vacuum vessel housing the flux conserver, with ports allowing access into the flux conserver. Probes and magnetic loops at the outer wall of the flux conserver affect the plasma only minimally. Spectrometers look for light emission characteristic of impurities. Data from a series of devices that measure the magnetic fields around the plasma as well as the plasma’s temperature and density are fed into CORSICA, a computer code that infers the plasma’s internal electron temperature, electrical current, and magnetic fields. (See S&TR, May 1998, Corsica: Integrated Simulations for Magnetic Fusion Energy.)

The primary diagnostic tool shines a laser beam through the plasma to scatter photons off electrons in the plasma, a process known as Thomson scattering. The scattered light is imaged at 10 spots across the plasma onto 10 optical fibers, which transport the light into polychromator boxes commercially produced by General Atomics. Detecting the light’s spread in wavelength provides temperature measurements from 2 to 2,000 electronvolts and is the best way yet to infer temperature with minimal disturbance to the plasma.
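Thomson scattering infers temperature from Doppler broadening. Electrons moving toward or away from the detector shift the wavelength of the scattered light, so a hotter plasma produces a wider spectrum. The sketch below is minimal and rests on several assumptions:

- a non-relativistic Maxwellian electron distribution;
- a 1064 nm laser (a common Nd:YAG wavelength, assumed here);
- a 90-degree scattering angle (also assumed).

Under these assumptions the spectrum's 1/e half-width is Δλ = 2λ₀ sin(θ/2)·√(2T_e/m_ec²). Relativistic corrections, which matter increasingly above a few hundred electronvolts, are ignored.

```python
import math

ME_C2_EV = 510_998.95  # electron rest energy, eV

def thomson_width_nm(t_e_ev, laser_nm, theta_deg):
    """1/e half-width (nm) of the scattered spectrum, non-relativistic Maxwellian."""
    s = math.sin(math.radians(theta_deg) / 2)
    return 2 * laser_nm * s * math.sqrt(2 * t_e_ev / ME_C2_EV)

def temperature_from_width_ev(width_nm, laser_nm, theta_deg):
    """Invert the width formula to recover the electron temperature (eV)."""
    s = math.sin(math.radians(theta_deg) / 2)
    return 0.5 * ME_C2_EV * (width_nm / (2 * laser_nm * s)) ** 2

LASER_NM = 1064.0  # assumed Nd:YAG wavelength (roughly 1 micrometer)
THETA = 90.0       # assumed scattering angle, degrees

for t in (20, 350):
    w = thomson_width_nm(t, LASER_NM, THETA)
    print(f"T_e = {t:>3} eV -> 1/e half-width ~ {w:.1f} nm")
```

The width scales with the square root of temperature. A 350 eV plasma therefore broadens the line about four times more than a 20 eV plasma does.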

| A camera captures (false-color) images of the plasma forming inside the flux conserver. (a) The plasma begins to balloon out of the injector gun at about 35 microseconds and (b) reaches the bottom of the flux conserver at 40 microseconds. (c) A column forms at about 50 microseconds. (d) At 80 microseconds, the column bends, which researchers think may precede an amplification of the magnetic field. |

Carlos Romero-Talamás, a Livermore postdoctoral fellow since February, developed a way to insert the lens for a high-speed camera up close to the SSPX plasma as part of his Ph.D. thesis for Caltech. This camera, installed 3 years ago, offers the most direct images of the plasma. Three ports mounted at the vessel’s median plane allow the camera to be moved to take wide-angle, 2-nanosecond images from different viewpoints. An intensifier increases the brightness of the images from the short exposures.

Images of the plasma during the first 100 microseconds of its lifetime show the central column in the torus forming and bending (see the sequence in the figure above). At 100 microseconds, the electrical current continues to increase, making the plasma highly ionized and too dim to see. “Unfortunately,” says Romero-Talamás, “we can’t see into the plasma as the column is ‘going toroidal.’ It’s the most interesting part of the process.”

As the current falls and is set to a constant value, the flux becomes more organized. The contrast improves, and images reveal a stable plasma column. Measurements of the column’s diameter based on these images compare well with the magnetic structure computed by the CORSICA code. At about 3,800 microseconds, the column expands into a messy collection of filaments and then reorganizes itself by 3,900 microseconds. The team is unsure why this process occurs.

Measurements of local magnetic fields within the plasma are not available with existing diagnostics. But they are necessary to make better sense of some of the camera’s images and to help determine when and where reconnection occurs. More magnetic field data may also show whether the bending of the plasma column precedes reconnection. A long, linear probe to measure local magnetic fields in the plasma was installed this summer. If the probe survives and does not perturb the plasma beyond acceptable levels, two more probes will be installed. The three probes together will provide a three-dimensional (3D) picture of changes in the torus’s central column.


| Measurements of electron temperature and density are obtained using Thomson scattering. This 1-micrometer-wavelength pulsed laser sends photons into the plasma where they scatter off free electrons. Data from 10 spots across the plasma are collected in 10 polychromator boxes behind physicist Harry McLean. |

Help from Supercomputers

The design of a spheromak device is relatively simple, but the behavior of the plasma inside is exceedingly complex. Diagnostic data about the plasma are limited, and CORSICA calculations, although valuable, reveal a picture of the plasma that is restricted to be cylindrically symmetric. Obtaining dynamic 3D predictions is possible only with simulations using the most powerful supercomputers. In addition to an in-house cluster, the team also uses the supercomputing power of the National Energy Research Scientific Computing (NERSC) Center at Lawrence Berkeley National Laboratory.

Livermore physicists Bruce Cohen and Hooper, working closely with Carl Sovinec of UWM, one of the developers of NIMROD, are using NIMROD to simulate SSPX plasma behavior. They and their collaborators use simulations to better understand and improve energy and plasma confinement.

To date, the team has successfully simulated the magnetics of SSPX versus time. The differences between the experimental data and simulations are at most 25 percent and typically are much less. The team found no major qualitative differences in the compared results, suggesting that the resistive magnetohydrodynamic physics in the code is a good approximation of the actual physics in the experiment. More recently, with improvements in the code, they have been able to compute temperature histories that agree relatively well with specific SSPX data. Even so, because of the complexity of spheromak physics, NIMROD still cannot reproduce all the details of spheromak operation.
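A percentage comparison like this depends on how the difference is normalized, and the article does not say which metric the team used. One plausible choice normalizes the largest pointwise difference by the peak of the measured trace. The sketch below shows that choice; it is hypothetical and uses toy input numbers that are not SSPX or NIMROD data.

```python
def max_relative_difference(measured, simulated):
    """Largest |simulated - measured| as a fraction of the peak measured magnitude.

    Normalizing by the peak (rather than point by point) avoids blowing up
    where the measured signal passes through zero.
    """
    if len(measured) != len(simulated) or not measured:
        raise ValueError("traces must be non-empty and the same length")
    peak = max(abs(m) for m in measured)
    if peak == 0:
        raise ValueError("measured trace is identically zero")
    return max(abs(s - m) for m, s in zip(measured, simulated)) / peak

# Hypothetical sampled traces (arbitrary units), NOT SSPX or NIMROD data
measured = [0.0, 0.4, 0.9, 1.0, 0.8]
simulated = [0.0, 0.5, 0.85, 0.95, 0.7]
print(f"max difference: {max_relative_difference(measured, simulated):.0%}")
```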

Cohen notes that, “Simulation results are tracking SSPX with increasing fidelity.” The simulations are using more realistic parameters and improved representations of the experimental geometry, magnetic bias coils, and detailed time dependence of the current source driving the plasma gun. The latest simulations confirm that controlling magnetic fluctuations is key to obtaining high temperatures in the plasma.

| Toward the end of the plasma’s lifetime, its central column may become (a) a messy collection of filaments and then (b) reorganize itself. (Images are false color.) |

SSPX is one of four experiments participating in the NSF Center for Magnetic Self-Organization in Laboratory and Astrophysical Plasmas. The other three experiments are at UWM, Princeton Plasma Physics Laboratory, and Swarthmore College. Because scientists want to learn more about how magnetic structures on the Sun and elsewhere in the universe rearrange themselves and generate superhot plasmas, the experiments focus on various processes of magnetic self-organization: dynamo, magnetic reconnection, angular momentum transport, ion heating, magnetic chaos and transport, and magnetic helicity conservation and transport.

| Time histories of data from an SSPX experiment (left column) and a NIMROD simulation (right column) show considerable similarity. Shown here are the (a) injector voltage and (b) poloidal magnetic field measured by a probe near the midplane of the flux conserver (n = 0 denotes the toroidally averaged edge magnetic field). These data are for the first 30 microseconds of a plasma’s lifetime, the time during which a plasma is being formed. |

Ryutov is not alone in wanting SSPX to produce a higher temperature plasma. Anything closer to fusion temperatures is a move in the right direction. The Livermore team was able to make the leap from 200 to 350 electronvolts by learning how to optimize the electrical current that generates the magnetic fields. However, achieving still higher temperatures will require new hardware. Today, a larger power system that includes additional capacitor banks and solid-state switches is being installed. More current across the electrodes will increase the magnetic field, which will translate into a considerable increase in the plasma’s temperature, perhaps as high as 500 electronvolts. The solid-state switches offer the additional benefit of doing away with the mercury in the switches now being used.

With the larger power source, the team can better examine several processes. They hope to understand the mechanisms that generate magnetic fields by helicity injection. They will explore starting up the spheromak using a smaller but steadier current pulse to gradually build the magnetic field. A higher seed magnetic field may improve spheromak operation. Data from both simulations and experiments also indicate that repeatedly pulsing the electrical current may help control fluctuations and sustain the plasma at higher magnetic fields.

The team hopes to add a beam of energetic neutral hydrogen particles to independently change the temperature of the plasma core. Besides adding to the total plasma heating power and increasing the peak plasma temperature, the beam would also allow the team to change the core’s temperature without changing the magnetic field. The group will then have a way to discover the independent variables that control energy confinement and pressure limits.

Only by understanding the complex physics of spheromak plasmas can scientists know whether the spheromak is a viable path to fusion energy. The potential payoff—cheap, clean, abundant energy—makes the sometimes slow progress worthwhile. At the moment, the science occurring within a spheromak is well ahead of researchers’ understanding. But this team is working hard to close that gap.

Why laser fusion is important.

Laser technology and silicon technology become cheaper every year. Until 1968, visible and infrared LEDs, made from gallium arsenide-based crystals, were extremely costly, on the order of US$200 per unit. Now they cost less than 1 cent each. Conversely, the cost of the niobium and titanium used in superconducting magnet coils has increased dramatically since then. Liquid helium is also rising dramatically in cost and may actually run out before fusion itself becomes possible.

New processes keep lowering the cost of producing powerful silicon-based lasers. One example is the growth of gallium nitride on silicon to produce blue lasers, developed in the early 2000s and used in Blu-ray technology. The same is true as we scale up to more powerful lasers: the cost of building these facilities has also gone down considerably, and they have proven much better able to cope with budget cuts and constraints. Working with full resources, these facilities can become remarkable laboratories indeed.

The world's most powerful laser system, at the National Ignition Facility (NIF) at Lawrence Livermore National Laboratory, can deliver a shaped laser pulse lasting only billionths of a second (nanoseconds). That pulse carries more than 500 trillion watts (terawatts or TW) of peak power and 1.85 megajoules (MJ) of ultraviolet laser light to its target.

In context, 500 terawatts is 1,000 times more power than the United States uses at any instant in time, and 1.85 megajoules of energy is about 100 times what any other laser regularly produces today.
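The NIF figures quoted above can be cross-checked with simple arithmetic. Energy equals average power times duration, and the average power cannot exceed the peak power. Dividing the delivered energy by the peak power therefore gives the shortest pulse that could carry 1.85 MJ at 500 TW.

```python
ENERGY_J = 1.85e6      # delivered laser energy, joules
PEAK_POWER_W = 500e12  # peak power, watts

# If all the energy were delivered at peak power, the pulse would last E / P.
# A real shaped pulse has a lower average power, so it must be longer.
min_duration_s = ENERGY_J / PEAK_POWER_W
print(f"minimum pulse duration: {min_duration_s * 1e9:.1f} ns")

# The "1,000 times" comparison implies an average U.S. power draw of P / 1000
implied_us_power_w = PEAK_POWER_W / 1000
print(f"implied U.S. average power: {implied_us_power_w / 1e9:.0f} GW")
```

The pulse must therefore last at least about 3.7 nanoseconds. The comparison with U.S. consumption corresponds to an average draw of roughly 500 gigawatts.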

The shot validated NIF's most challenging laser performance specifications, set in the late 1990s when scientists were planning the world's most energetic laser facility. Combining extreme levels of energy and peak power on a target in the NIF is a critical requirement for achieving one of physics' grand challenges: igniting hydrogen fusion fuel in the laboratory and producing more energy than that supplied to the target.

The first step in achieving an experimental fusion reaction is to induce inertial confinement of a mixture of deuterium and tritium (isotopes of hydrogen) at densities high enough for a self-sustaining reaction. Such a reaction requires frequent fusion collisions between individual nuclei, which can only occur in a high-density plasma.
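The role of density can be made concrete. The volumetric fusion reaction rate is R = n_D n_T ⟨σv⟩, where ⟨σv⟩ is the reactivity, the cross-section averaged over the particle velocity distribution. Because R grows with the square of density, compressing the fuel tenfold raises the reaction rate a hundredfold. This order-of-magnitude sketch assumes a 50/50 deuterium-tritium mix at about 10 keV and uses an approximate textbook reactivity.

```python
SIGMA_V_DT_10KEV = 1.1e-22               # approximate D-T reactivity near 10 keV, m^3/s
E_FUSION_J = 17.6e6 * 1.602176634e-19    # 17.6 MeV per reaction, in joules

def reaction_rate(n_total):
    """Reactions per m^3 per second for a 50/50 D-T mix of total ion density n_total."""
    n_d = n_t = n_total / 2
    return n_d * n_t * SIGMA_V_DT_10KEV

def power_density(n_total):
    """Fusion power density, W/m^3."""
    return reaction_rate(n_total) * E_FUSION_J

for n in (1e20, 2e20, 1e21):
    print(f"n = {n:.0e} m^-3 -> {power_density(n):.2e} W/m^3")
```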

Various methods of achieving this have been tried. One uses the Z-pinch process in a so-called Z-machine to create high-energy X-rays that compress fuel pellets. Another confines a plasma with magnetic fields inside a toroidal (doughnut-shaped) chamber, a device called a tokamak.

A lot of experimental results have come from high-energy laser facilities such as the National Ignition Facility. These results serve not only fusion physics but also the testing of nuclear weapons. This reduces the need for underground or undersea tests of thermonuclear weapons, since many of the relevant experiments can be performed in a laser ignition facility with minimal effects on the environment.

For commercial nuclear fusion, the tokamak is considered the best design for achieving a self-sustaining fusion reaction, because its toroidal field creates a "bottle" of fusion plasma. Such a reactor would have to be very large to confine the plasma long enough for self-sustaining fusion. The International Thermonuclear Experimental Reactor (ITER) in France is by far the leading facility for testing the viability of an energy-generating reactor.
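Why size matters can be expressed with the Lawson criterion. For D-T fuel, ignition requires the "triple product" of ion density n, temperature T, and energy confinement time τ_E to exceed roughly 3×10²¹ keV·s/m³ at temperatures around 10 to 20 keV. Magnetically confined plasmas are limited in density, so they must achieve long confinement times, and τ_E grows with machine size. The threshold below is approximate, and the parameters are hypothetical rather than ITER's design values.

```python
# Approximate D-T ignition threshold for n * T * tau_E, in keV * s / m^3
TRIPLE_PRODUCT_IGNITION = 3e21

def triple_product(n_m3, t_kev, tau_e_s):
    """Fusion triple product n * T * tau_E."""
    return n_m3 * t_kev * tau_e_s

def meets_ignition(n_m3, t_kev, tau_e_s):
    """True if the triple product reaches the approximate ignition threshold."""
    return triple_product(n_m3, t_kev, tau_e_s) >= TRIPLE_PRODUCT_IGNITION

# Hypothetical magnetic-confinement parameters, for illustration only
n, t = 1e20, 10.0
for tau in (0.1, 1.0, 4.0):
    ok = meets_ignition(n, t, tau)
    print(f"tau_E = {tau:>3} s -> nT tau = {triple_product(n, t, tau):.1e}  ignition: {ok}")
```

At a fixed density and temperature, only the confinement time can be raised, and that is what a larger machine buys.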

Extracting the energy from the reaction is a different matter. Making fusion an efficient source of power will probably require the invention of a high-temperature superconducting heat exchanger or confined superfluid technology.

So far, the best candidate for extracting heat from a proposed fusion reactor core is a metal with a high cross-section for gamma rays and a high melting point, able to withstand the absorbed infrared radiation. Hence a tungsten alloy facing the fusion reactor plasma is the best form of fusion heat exchanger available with current technology.

Exploring other fusion reactions that use fuels that are easier to obtain is another major challenge in developing an efficient fusion reaction. Reactions with helium-3, and even a man-made carbon-nitrogen-oxygen (CNO) cycle, have been proposed.

Even the use of low-energy muons to catalyse the reaction has been proposed, though this will probably remain a long way off until a cost-efficient muon generator is developed.

In NIF's laser fusion, the lasers fired within a few trillionths of a second of each other onto a 2-millimeter-diameter target. The total energy matched the amount requested by shot managers to within better than 1 percent.

The interesting thing about laser fusion is that if you make the laser pulses short enough, on the order of a few hundred attoseconds, say, you could in principle make a laser that would skip electronic transitions and manipulate the nuclei of the atoms directly. There would then be no blast from the laser itself, only from the nuclear reactions. This would give the highest possible efficiency for inducing fusion and the highest level of control, since all of the radiation emitted would result from reactions triggered directly by the laser pulse.

The 1999 Nobel Prize in Chemistry was awarded to Ahmed Zewail for using femtosecond laser spectroscopy to observe the transition states of chemical reactions. Imagine what progress could be made using even shorter laser pulses to control nuclear reactions. In the future it may even be possible to perform subatomic physics with lasers: going beyond the Schwinger limit, the field strength above which the vacuum is expected to break down into electron-positron pairs, could in principle create high-energy particles directly from the vacuum. This could replace large accelerators for some particle physics and allow mass production of certain unstable particles for scientific use.
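The Schwinger limit has a compact closed form, E_S = m_e²c³/(eħ), the field at which quantum electrodynamics predicts electron-positron pairs to be pulled out of the vacuum. The Python sketch evaluates it from CODATA 2018 constants and converts it to the cycle-averaged intensity of a linearly polarized plane wave, I = cε₀E²/2; some sources quote cε₀E² instead, a factor of two higher, so treat the intensity as an order-of-magnitude figure.

```python
# CODATA 2018 values, SI units.
M_E = 9.1093837015e-31   # electron mass, kg
C = 299_792_458.0        # speed of light, m/s
Q_E = 1.602176634e-19    # elementary charge, C
HBAR = 1.054571817e-34   # reduced Planck constant, J s
EPS0 = 8.8541878128e-12  # vacuum permittivity, F/m


def schwinger_field() -> float:
    """Critical field E_S = m_e^2 c^3 / (e * hbar), in V/m."""
    return M_E**2 * C**3 / (Q_E * HBAR)


def intensity_w_per_cm2(field_v_per_m: float) -> float:
    """Cycle-averaged intensity of a linearly polarized plane wave, I = c*eps0*E^2/2, in W/cm^2."""
    return 0.5 * C * EPS0 * field_v_per_m**2 / 1e4  # W/m^2 -> W/cm^2


e_s = schwinger_field()
print(f"Schwinger field: {e_s:.3e} V/m")
print(f"Equivalent laser intensity: {intensity_w_per_cm2(e_s):.1e} W/cm^2")
```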

With existing technology, optical switches can be built that change the quality factor (Q-factor) of the oscillator and amplifier cavities. The cavity is first held at a low Q while the gain medium is pumped; the Q-factor is then suddenly raised, so the ratio of stored to dissipated energy in the cavity changes from a low to a high value. Because the oscillations then build up very rapidly, the accumulated energy is released in a short time, and the laser pulse dies out when the excited-state population is depleted. In this way the Q-switch restricts the laser output to a short-duration pulse.
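The Q-switching sequence can be illustrated with the standard normalized rate equations for a four-level laser after the Q is raised. This is a textbook idealization, not a model of NIF's oscillator: with the inversion n measured in units of its threshold value, the photon number φ in the same units, and time in units of the cavity photon lifetime, dn/dt = -nφ and dφ/dt = (n - 1)φ. Pumping and spontaneous emission during the pulse are neglected, and the starting inversion (3× threshold) and the tiny photon seed are hypothetical values. The sketch integrates the equations with a simple forward-Euler step.

```python
def simulate_q_switch(n_initial=3.0, phi_seed=1e-6, dt=1e-3, t_end=30.0):
    """Forward-Euler integration of normalized four-level Q-switch rate equations.

    n   : population inversion divided by its threshold value (after Q is raised)
    phi : photon number in the cavity, in the same normalized units
    t   : time in units of the cavity photon lifetime
    Returns a list of (t, n, phi) samples.
    """
    n, phi, t = n_initial, phi_seed, 0.0
    history = []
    while t < t_end:
        dn = -n * phi            # stimulated emission depletes the inversion
        dphi = (n - 1.0) * phi   # net gain above threshold, net loss below it
        n += dn * dt
        phi += dphi * dt
        t += dt
        history.append((t, n, phi))
    return history


history = simulate_q_switch()
t_peak, n_at_peak, phi_peak = max(history, key=lambda row: row[2])
n_final = history[-1][1]
print(f"peak photon number {phi_peak:.3f} at t = {t_peak:.1f} (inversion {n_at_peak:.2f})")
print(f"final inversion {n_final:.3f}")
```

For these equations the pulse peaks as the inversion falls through threshold (n = 1), and the peak can be checked against the closed form n_i - 1 - ln n_i ≈ 0.90 for n_i = 3; most of the stored inversion is extracted, leaving roughly 0.18 of threshold.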

The NIF system has two main switching elements. One of them, the master-oscillator Q-switch, functions essentially as an electro-optical shutter: its refractive index, as seen by the laser beam, changes when certain voltages are applied.

In the master oscillator, the output energy is deliberately kept small (10⁻³ to 10⁻¹ J) to give better control of the laser pulse.

In the next step, a telescope system magnifies the radius of the laser beam leaving the oscillator before it enters the system of amplifiers. The radiance of the oscillator pulse is then increased by a series of amplifiers, by a factor of 10⁴ to 10⁸, bringing the pulse energies into the range of 10 to 10⁵ J.
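The telescope step matters because amplifier glass and coatings can only tolerate a limited fluence (energy per unit area). Magnifying the beam radius by a factor M spreads the same energy over M² times the area, reducing the fluence by M²; this is a common motivation for beam expansion, offered here as background rather than as something stated above. The numbers in the Python sketch (a 0.1 J pulse, a 0.1 cm radius, a flat-top beam profile) are hypothetical.

```python
import math


def fluence_j_per_cm2(energy_j: float, radius_cm: float) -> float:
    """Average fluence of a flat-top beam with the given energy and radius."""
    return energy_j / (math.pi * radius_cm**2)


# Hypothetical inputs: 0.1 J (top of the oscillator range) and a 0.1 cm beam radius.
energy = 0.1
radius = 0.1
for magnification in (1, 10, 100):
    f = fluence_j_per_cm2(energy, radius * magnification)
    print(f"radius x{magnification:>3}: {f:.2e} J/cm^2")
```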

How is this massive increase in pulse energy achieved? In each amplifier, the medium has to be pumped from the outside to achieve a population inversion before the beam actually enters it. In a standard neodymium laser, the population inversion can be produced using xenon flash lamps.

However, the efficiency with which these flash lamps convert energy into gain in pulse energy is very low, only 1-2%, so new technological developments are urgently needed in this area.

New techniques using diode lasers as pumping systems provide hope that up to 40% efficiency could eventually be achieved.
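The practical weight of these efficiency figures is easiest to see by inverting them: delivering a pulse energy E at pump-to-pulse efficiency η needs about E/η of pump energy. This Python sketch treats η as a single overall number (a simplification; real systems have several loss stages) and uses 10⁵ J, the top of the amplified range quoted earlier, as the target.

```python
def pump_energy_j(pulse_energy_j: float, efficiency: float) -> float:
    """Pump energy needed to add `pulse_energy_j` at a given overall pump-to-pulse efficiency."""
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in (0, 1]")
    return pulse_energy_j / efficiency


PULSE_ENERGY_J = 1e5  # upper end of the amplified pulse-energy range in the text

for label, eff in [("flash lamps, 1%", 0.01), ("flash lamps, 2%", 0.02), ("diodes, 40%", 0.40)]:
    print(f"{label:>16}: {pump_energy_j(PULSE_ENERGY_J, eff):.2e} J of pump energy")
```

At 1-2% the pump must supply 5-10 MJ for a 100 kJ pulse; at 40% it is 250 kJ, a 20- to 40-fold reduction.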

In any case, the beam is sent through these regions of high population inversion to create an avalanche of stimulated emission at more or less the same fundamental frequency. The spectrum tends to broaden a little (chirping), which requires the beam to be shaped by temporal mode-locking. The beam is then amplified in the cavity by being reflected back and forth to form a standing wave. It is also Q-switched here, because the system acts as a frustrated-total-internal-reflection (FTIR) Q-switch, which shortens the pulse again considerably and concentrates the energy, with the aim of skipping the electronic transitions and inducing nuclear reactions. This requires very high-power laser pulses striking the walls around a fuel sample to create a high flux of x-rays, which can exert significant radiation pressure to induce fusion.
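For scale, the simplest estimate of the push exerted by a beam of intensity I at normal incidence is the radiation pressure P = (1 + R)I/c, where R is the surface reflectivity (R = 0 for full absorption). The Python sketch evaluates it for a hypothetical intensity of 10¹⁵ W/cm². It deliberately ignores ablation pressure (heated surface material blowing off and driving the rest inward by recoil), which in practical inertial-confinement designs is far larger than direct radiation pressure.

```python
C = 299_792_458.0  # speed of light, m/s


def radiation_pressure_pa(intensity_w_per_cm2: float, reflectivity: float = 0.0) -> float:
    """Radiation pressure of a normally incident beam: P = (1 + R) * I / c, in pascals."""
    intensity_w_per_m2 = intensity_w_per_cm2 * 1e4
    return (1.0 + reflectivity) * intensity_w_per_m2 / C


intensity = 1e15  # W/cm^2, hypothetical illustrative value
p = radiation_pressure_pa(intensity)
print(f"{intensity:.0e} W/cm^2 fully absorbed -> {p:.2e} Pa ({p / 1e11:.2f} Mbar)")
```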

X-ray laser sources are therefore a potential technology for fusion in their own right. Concentrated x-ray sources can be created when lasers interact with a medium so as to produce an effective plasma of electrons from which coherent x-ray radiation can be induced.

This process, High Harmonic Generation (HHG), is very difficult to induce, but it has been achieved by using high-power lasers to create a plasma (either in a gas-filled waveguide or on a metal surface) whose electrons oscillate coherently and undergo transitions that generate a coherent stream of x-rays, which can interfere constructively into short, intense bursts.
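A widely used rule of thumb for how far HHG reaches in photon energy is the semiclassical cutoff law E_max ≈ I_p + 3.17 U_p, where I_p is the ionization potential of the medium and U_p ≈ 9.33 × 10⁻¹⁴ · I[W/cm²] · λ²[μm] eV is the ponderomotive energy of an electron in the driving field. It applies to HHG in gases, not to the surface-plasma case. The inputs in the Python sketch (argon, an 800 nm driver at 10¹⁴ W/cm²) are example assumptions.

```python
def ponderomotive_energy_ev(intensity_w_per_cm2: float, wavelength_um: float) -> float:
    """Ponderomotive energy U_p ~ 9.33e-14 * I[W/cm^2] * lambda[um]^2, in eV."""
    return 9.33e-14 * intensity_w_per_cm2 * wavelength_um**2


def hhg_cutoff_ev(ionization_potential_ev: float,
                  intensity_w_per_cm2: float,
                  wavelength_um: float) -> float:
    """Semiclassical three-step-model cutoff: E_max ~ I_p + 3.17 * U_p, in eV."""
    return ionization_potential_ev + 3.17 * ponderomotive_energy_ev(intensity_w_per_cm2, wavelength_um)


# Example assumptions: argon (I_p ~ 15.76 eV), 800 nm driver (photon energy ~ 1.55 eV).
cutoff = hhg_cutoff_ev(15.76, 1e14, 0.8)
print(f"cutoff photon energy ~ {cutoff:.1f} eV, "
      f"i.e. harmonic order ~ {cutoff / 1.55:.0f} of an 800 nm driver")
```

With these inputs the cutoff is only around 35 eV, in the extreme ultraviolet; because U_p grows with λ², longer-wavelength drivers are one route toward the soft x-ray range.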

Therefore, a device that can not only sustain a plasma but also irradiate it with a laser designed to produce x-rays that implode the plasma in a fusion reaction is a potential fusor design that must also be considered. X-ray optics is, needless to say, much more complex than optics at standard laser wavelengths; however, the most universal material for x-ray optics happens to be the material of choice for fusor cathodes in the dense plasma focus and spheromak designs, namely beryllium.

Because of its low atomic number (Z), beryllium is highly transparent to X-rays. Hence a beryllium device designed to produce and sustain a plasma for a reasonable period of time, such as a spheromak, could in principle have its plasma irradiated with X-rays generated on the walls of the spheromak by a high-power laser pulse from a source outside the reactor vessel. This would allow the plasma to be imploded in pockets and induce fusion reactions.

Such devices are purely speculative at this stage, with many design iterations still to go before the right one emerges. Even setting the engineering difficulties aside and returning to the fundamental physics, there turn out to be many different ways in which aneutronic fusion can occur, and the mechanisms are normally found in the nuclear cycles of stars outside the main sequence. To have any chance of replicating these reactions, they must be studied and the viable mechanisms separated from the unviable ones.

The most interesting aneutronic fusion reactions are Proton-Boron-11 fusion and Helium-3 fusion, and it is up to scientific experiment and engineering development to decide whether these are viable.

Proton-Boron-11 Fusion

The p-B11 reaction is considered an aneutronic fusion reaction, with an optimum plasma temperature of about 123 keV and (nevertheless) a neutron emission rate of 0.001, i.e. one neutron for every thousand fusion reactions. The reaction yields three Helium-4 nuclei and energy:

p + ¹¹B → 3 ⁴He + 8.7 MeV

At first sight, with one Boron-11 nucleus delivering three Helium-4 nuclei, the reaction looks like a fission reaction. This is not the case, however: in the first stage of the reaction, fusion forms an unstable (excited) Carbon-12 nucleus, which in the second stage immediately decays into three α-particles, i.e. Helium-4 nuclei (two protons and two neutrons each).
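The 8.7 MeV released follows directly from the mass defect. Using atomic masses (the six electrons on each side cancel), Q = [m(¹H) + m(¹¹B) - 3m(⁴He)]c². The Python sketch uses rounded Atomic Mass Evaluation values; it computes only the total energy release, not how that energy is shared among the three α-particles.

```python
# Atomic masses in unified atomic mass units (rounded Atomic Mass Evaluation values).
M_H1 = 1.00782503    # hydrogen-1 (proton plus one electron)
M_B11 = 11.00930536  # boron-11 (five electrons)
M_HE4 = 4.00260325   # helium-4 (two electrons)
U_TO_MEV = 931.494   # energy equivalent of 1 u, in MeV


def q_value_mev() -> float:
    """Q for p + 11B -> 3 4He from atomic masses; electron counts balance (6 on each side)."""
    return (M_H1 + M_B11 - 3 * M_HE4) * U_TO_MEV


print(f"Q(p + 11B -> 3 alpha) = {q_value_mev():.2f} MeV")
```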

The claimed aneutronicity of the reaction is valid for the main reaction, but side reactions may yield low energy neutrons or hard gamma rays.

α + ¹¹B → ¹⁴N + n

p + ¹¹B → ¹¹C + n

p + ¹¹B → ¹²C + γ

The cross-section of the reaction peaks at around 600 keV, which is considered quite high, so a p-B11 reactor will require a totally different design. Being aneutronic, using abundant Boron, and producing only charged particles (which enables direct conversion into electricity) make the reaction quite desirable for commercial fusion applications, though the engineering problems of designing a p-B11 reactor are currently quite challenging.

Boron is present in huge quantities as a natural resource. Proven mineral reserves exceed one billion metric tonnes, and current yearly production is about four million tonnes. Turkey has 72% of the world's known deposits, followed by the U.S.A. as the second-largest source.

The boron fuel of choice for testing aneutronic fusion is either a carbon-based boron ester composite, for a laser-assisted proof-of-principle test, or, for large-scale fusion reactions, boranes such as decaborane, in which the whole molecule decomposes in a plasma to yield monatomic boron ions that fuse with a proton beam.

Decaborane, a boron hydride: B₁₀H₁₄
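To put decaborane's suitability as a boron carrier in numbers, the Python sketch computes its molar mass, its boron mass fraction, and the expected number of boron-11 atoms per molecule. It assumes conventional standard atomic weights (B = 10.81, H = 1.008) and a natural boron-11 abundance of about 80.1% by atom count; isotopically enriched boron would raise the last figure.

```python
# Conventional standard atomic weights and the natural atom fraction of boron-11.
ATOMIC_WEIGHT_B = 10.81
ATOMIC_WEIGHT_H = 1.008
B11_ATOM_FRACTION = 0.801  # natural boron is roughly 80% boron-11 by atom count

N_B, N_H = 10, 14  # atoms per decaborane molecule, B10H14

molar_mass = N_B * ATOMIC_WEIGHT_B + N_H * ATOMIC_WEIGHT_H
boron_mass_fraction = N_B * ATOMIC_WEIGHT_B / molar_mass
b11_atoms_per_molecule = N_B * B11_ATOM_FRACTION

print(f"molar mass ~ {molar_mass:.2f} g/mol")
print(f"boron mass fraction ~ {boron_mass_fraction:.1%}")
print(f"boron-11 atoms per molecule (natural isotopic mix) ~ {b11_atoms_per_molecule:.1f}")
```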

Decaborane is also potentially useful for low-energy ion implantation of boron in the doping of semiconductors, so this research has technological spin-offs well beyond the utility of fusion power. It has also been considered for plasma-assisted chemical vapor deposition in the manufacture of boron-containing thin films. As is often the case with plasma physics in general, most nuclear fusion research is essentially research into new technology for semiconductor and thin-film production, testing new plasma technologies for etching, chemical deposition and so on, and is not directly related to mainstream power production. Aneutronic fusion will almost certainly be used as a means of single-atom implantation, which would allow for single-digit-nanometer transistors. So what is called fusion energy research is often semiconductor research in disguise.

In fusion research itself, thin boron-rich films are used to "boronize" the walls of the fusion vacuum vessel, gettering oxygen and reducing the recycling of particles and impurities into the plasma to improve overall performance. Hence, whichever way you look at it, the use of boron in fusion research has rich scientific and technological applications.
